The upcoming fusion of the real world and virtual information creates a strong need for new ideas and techniques for dealing with virtual information items. This holds especially in instrumented environments, which aim at the seamless integration of virtual digital objects and applications into physical environments. One new technique that allows the intuitive handling of such information items is the wiping interaction technique presented on this page. By executing a physical wiping gesture, the user can easily and intuitively move digital information items between interaction areas within range of sight.

There are already various interaction metaphors and techniques for the direct handling and manipulation of digital data. They can be classified by their application radius: The "Drag & drop" technique known from desktop computers is suitable for moving objects such as files or windows within a monolithic user interface. "Pick and Drop" [Rekimoto, 1997], Hyperdrag [Rekimoto and Saitoh, 1999], and "Shuffle, Throw or Take and Put" [Geißler, 1998] extend this idea to carry information objects beyond system borders by the use of real-world container objects. The information items are placed on pointers or container objects and can be dropped onto the target system later. Although these concepts already cover many situations, they are still limited to setups and situations where the target system is within reach of the user.

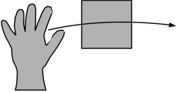

The interaction technique of "wiping", developed by the FLUIDUM research group, allows moving virtual information items to systems beyond the reach of the user. The technique is based on the motion that is executed, for instance, to wipe crumbs from a table. The intuition is that wiped information items independently continue moving towards a certain target system even after the initial gesture has ended. A potential target system then "catches" the moving information items, just as crumbs stop falling once they have reached the floor.

The wiping interaction technique has some interesting properties. First of all, nearly the same gesture can be used in virtual applications (through mouse or touch screen input) as well as in real world applications (through hand gestures or by arbitrary interaction artifacts). Another feature is that only one gesture is required to select the objects that should be manipulated and to execute the action itself. Thus efficient interaction without the need of special selection gestures is possible. A third characteristic is that wiping can be observed by a variety of different sensors: Mice, touch screens, acceleration sensors in artifacts, cameras and computer vision techniques, and any other positioning system with sufficient spatial and temporal resolution.

While photo prints can be comfortably passed around and shown when many people sit together at a large table, handling digital images on a computer is somewhat cumbersome and impersonal with a large crowd.

Instrumented rooms like our FluidRoom make it possible to combine the advantages of the real world with the flexibility of digital information items. In our photo example, digital images can be projected onto an ordinary table surface. Like prints, they can be moved around, turned, and looked at by many people at the same time. Participants can wipe images from their personal devices onto the table and, after the slideshow, wipe (copy) new photos back onto their devices. Images that may be interesting for everyone can be wiped onto a wall to collectively select images for a slideshow without leaving the desk or using any further devices. The figure shows our prototype of an instrumented photo desk as shown at the CeBIT fair in 2004.

A wiping gesture is defined by its wiping path and timing diagram. The path denotes the spatial characteristics of the gesture within the interaction plane. The timing diagram shows the acceleration, execution speed and deceleration behavior of the gesture.

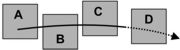

The wiping path is analyzed for two reasons. First, it determines the objects that should be manipulated by wiping: these are all the objects that are crossed by the path. You can imagine that, while the wiping gesture is executed, the crossed objects accumulate under the interaction artifact, just as crumbs on a table are collected by the palm. Second, the wiping path determines the direction in which to move the collected items. This is the direction given by the motion of the interaction artifact at the end of the gesture.
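
The two uses of the wiping path can be illustrated with a minimal sketch. It assumes that the path is available as a list of sampled (x, y) points in the interaction plane and that every information item has an axis-aligned bounding box; the names `Item`, `collect_items` and `end_direction` are hypothetical and not taken from the prototype.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Item:
    """Hypothetical information item with an axis-aligned bounding box."""
    name: str
    x: float  # left edge
    y: float  # top edge
    w: float  # width
    h: float  # height

    def contains(self, px, py):
        return self.x <= px <= self.x + self.w and self.y <= py <= self.y + self.h


def collect_items(path, items):
    """Return the items crossed by the path, in the order they were first hit."""
    collected = []
    for px, py in path:
        for item in items:
            if item not in collected and item.contains(px, py):
                collected.append(item)
    return collected


def end_direction(path, tail=3):
    """Unit vector of the motion over the last `tail` samples, or None."""
    if len(path) < 2:
        return None
    x0, y0 = path[max(0, len(path) - tail)]
    x1, y1 = path[-1]
    dx, dy = x1 - x0, y1 - y0
    norm = hypot(dx, dy)
    if norm == 0:
        return None  # the artifact did not move at the end of the gesture
    return (dx / norm, dy / norm)


path = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0)]
items = [Item("a", 0.5, -0.5, 1, 1), Item("b", 10, 10, 1, 1), Item("c", 2.5, -0.5, 1, 1)]
print([i.name for i in collect_items(path, items)])  # items "a" and "c" lie on the path
print(end_direction(path))
```

This sketch only tests the sampled points, so a very fast gesture could jump over a small item between two samples; testing the line segments between samples against the bounding boxes would be more robust.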

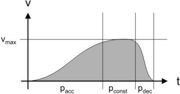

The timing diagram is used to separate consecutive wiping gestures and to determine their intensity. A wiping gesture is divided into three parts, which can be identified in the timing diagram: the acceleration phase, the constant movement phase, and the deceleration phase. During the first two phases, the objects that should be manipulated may be collected. The deceleration phase signals that the gesture is about to end and is used to detect the target direction from the wiping path. The intensity of the wiping gesture, and thus the distance that the information items should be moved, is controlled by the maximum speed reached by the interaction artifact during the constant movement phase.
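
As a rough illustration of how a timing diagram could be evaluated, the following sketch computes a speed profile from timestamped positions, separates consecutive gestures at rest periods, and splits one gesture into the three phases. The thresholds `rest`, `plateau` and `gain`, as well as all function names, are assumptions made for this example; the text does not specify how the prototype chooses them.

```python
from math import hypot


def speed_profile(samples):
    """samples: list of (t, x, y) with increasing t. Returns a list of (t, speed)."""
    profile = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        profile.append((t1, hypot(x1 - x0, y1 - y0) / (t1 - t0)))
    return profile


def split_gestures(profile, rest=0.1):
    """Separate consecutive gestures wherever the speed drops to `rest` or below."""
    gestures, current = [], []
    for t, s in profile:
        if s > rest:
            current.append((t, s))
        elif current:
            gestures.append(current)
            current = []
    if current:
        gestures.append(current)
    return gestures


def segment_phases(profile, plateau=0.8):
    """Split one gesture into acceleration, constant and deceleration phases.

    Assumption: the constant phase spans the first to the last sample whose
    speed is at least `plateau` times the peak speed.
    """
    speeds = [s for _, s in profile]
    peak = max(speeds)
    fast = [i for i, s in enumerate(speeds) if s >= plateau * peak]
    first, last = fast[0], fast[-1]
    return {
        "acceleration": profile[:first],
        "constant": profile[first:last + 1],
        "deceleration": profile[last + 1:],
        "peak_speed": peak,
    }


def travel_distance(peak_speed, gain=0.5):
    """Assumed linear mapping from gesture intensity to travel distance."""
    return gain * peak_speed


samples = [(0, 0, 0), (1, 1, 0), (2, 3, 0), (3, 6, 0), (4, 9, 0), (5, 11, 0), (6, 12, 0)]
phases = segment_phases(speed_profile(samples))
print(phases["peak_speed"], travel_distance(phases["peak_speed"]))
```

The linear mapping in `travel_distance` is only one possible choice; any monotonic function of the peak speed would preserve the idea that a more intense gesture moves the items farther.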

For the recognition of wiping gestures in our prototype implementation, we used computer vision techniques. The interaction surface is observed by a video camera. To detect motion in the resulting video stream, we use differential picture analysis and derive the timing diagram and wiping path of potential wiping gestures from it.
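
The idea of differential picture analysis can be sketched as simple frame differencing. The example below is not the prototype's implementation: it assumes grayscale frames given as nested lists of brightness values, a hypothetical brightness threshold, and a fixed frame rate, and it takes the centroid of all changed pixels as the position of the interaction artifact.

```python
def motion_centroid(prev, curr, threshold=30):
    """Centroid (x, y) of pixels whose brightness changed by more than
    `threshold` between two frames, or None if nothing moved."""
    sum_x = sum_y = count = 0
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (a, b) in enumerate(zip(row_prev, row_curr)):
            if abs(a - b) > threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


def track(frames, fps=25.0, threshold=30):
    """Return (t, x, y) samples, one for every frame pair that shows motion."""
    samples = []
    for i in range(1, len(frames)):
        centroid = motion_centroid(frames[i - 1], frames[i], threshold)
        if centroid is not None:
            samples.append((i / fps, centroid[0], centroid[1]))
    return samples


def blank(size=4):
    return [[0] * size for _ in range(size)]


# A single bright pixel that appears and then moves two columns to the right.
f0, f1, f2 = blank(), blank(), blank()
f1[1][1] = 255
f2[1][3] = 255
print(track([f0, f1, f2], fps=1.0))
```

The x and y values of the resulting samples form the wiping path, and the samples together with their timestamps yield the timing diagram. Note that a difference image contains both the old and the new position of a moving object, so the centroid lies between the two; this smooths the path slightly.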